Deconvolution Generative Adversarial Network Generating Endless Insects

Our motivation for this project was the generation of novel content with very small datasets. This is more difficult than applying or transferring style from one particular image to the content of another, but the results tend to be more “surprising”, and it simplifies experimentation by allowing us to generate and test each dataset's output quite quickly.

At first we experimented with a tiny dataset of 250 images of skyscrapers in order to generate new scale models of buildings:

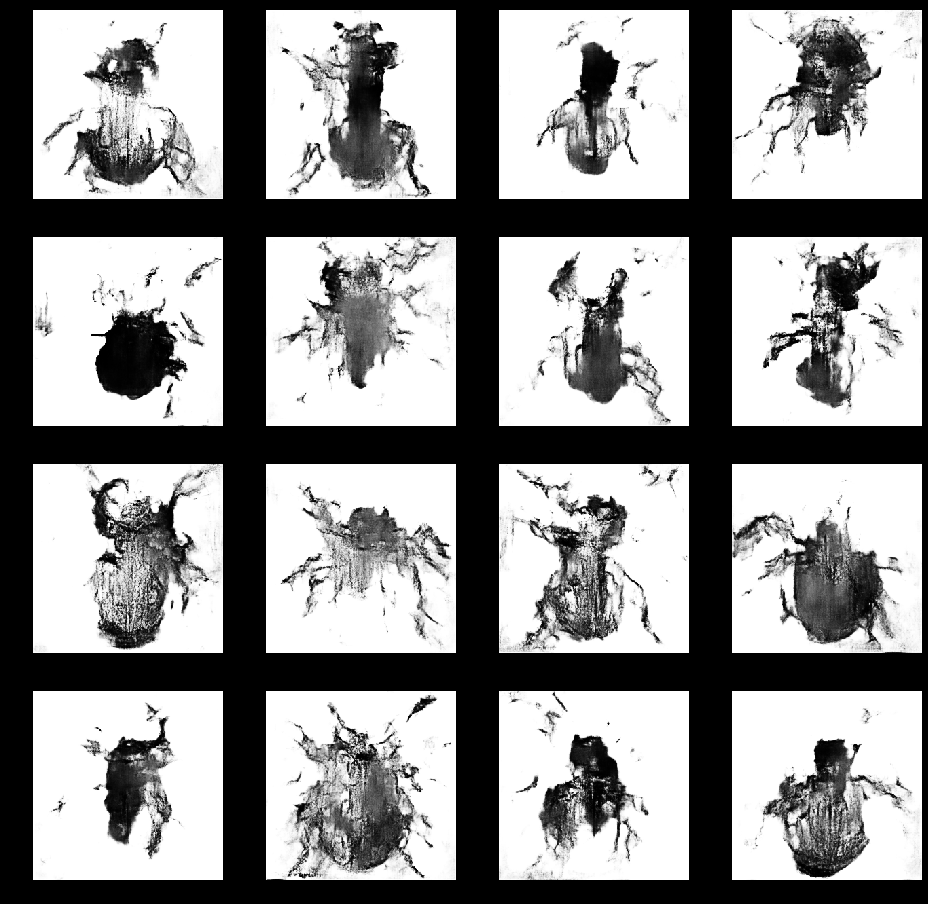

We found that our models converged poorly on this dataset because it was too small, but their output did possess an “artistic” quality. We then trained the model (50 min 51 s) on 250 very similar images of beetles, and the GAN started generating unequivocal “beetles”:

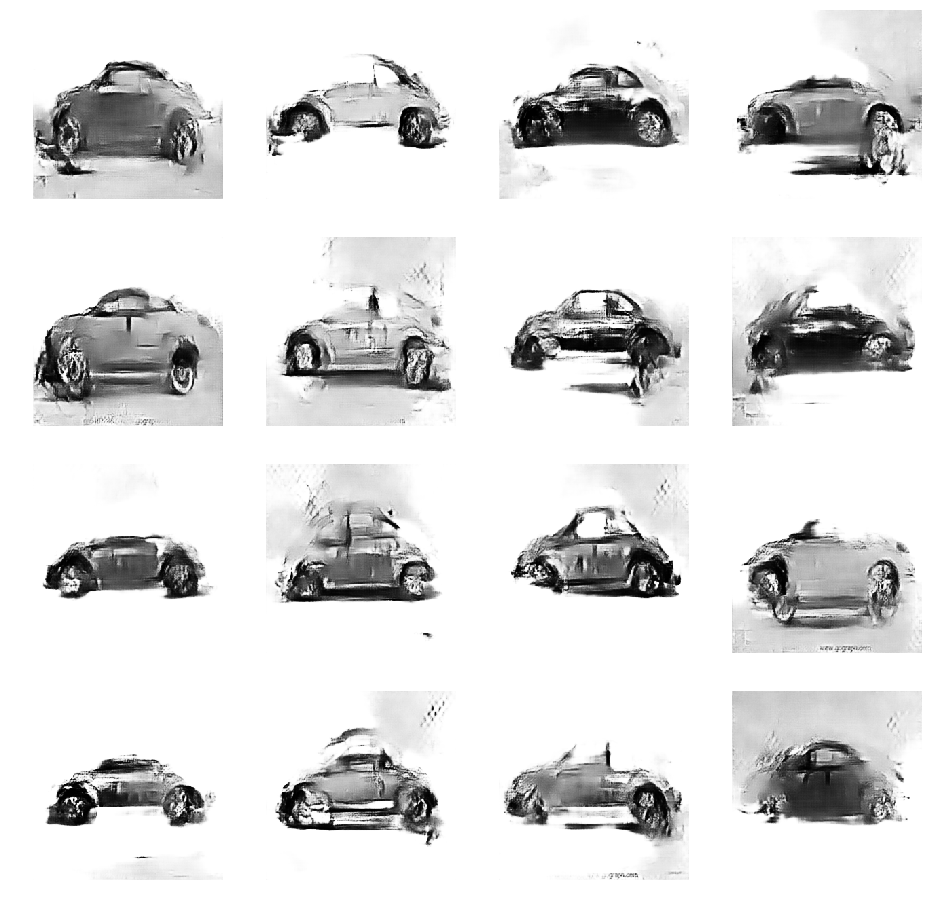

Then we trained a new model (43 min 51 s) on an even smaller set of 50 images of (VW!) beetles, and the resulting fake beetles were really fun:

In a sense, current neural networks are only tools, albeit quite impressive ones, but the days of truly autonomous creativity are getting closer. We’ll keep posting some of the results here, as it is quite interesting to explore the possibilities of synthetic content and, eventually, autonomous “artists” and “designers”.